Is AI Coaching Support Safe? What You Need to Know About Privacy in 2026

AI coaching tools are now a standard part of professional services, offering features like session insights, emotion analysis, and 24/7 client support. However, privacy concerns are growing as these tools handle sensitive data like personal reflections, financial records, and even biometric information.

Here’s what you need to know:

- Data Collection: Tools gather session transcripts, voice recordings, and even biometric data, raising concerns about storage and misuse.

- Security Risks: Many platforms use opt-out models for training AI, meaning your data may be used unless you disable this feature.

- Transparency Issues: Users often lack clarity on how their data is stored, used, or shared, leading to trust concerns.

- Regulations: U.S. states and global laws are tightening privacy rules, requiring AI platforms to meet stricter standards.

To protect your privacy, choose platforms with strong security features like data encryption and multifactor authentication. Review privacy policies carefully, share only necessary data, and adjust settings to limit data sharing. Balancing the benefits of AI tools with these precautions ensures a safer experience.



Main Privacy Risks in AI Coaching

AI coaching tools bring convenience and efficiency but come with a host of privacy concerns. These platforms gather extensive data, ranging from session details to biometric and technical information. Below, we’ll dive into key risks tied to data collection, usage, and the transparency of AI algorithms.

Data Collection and Storage Issues

AI coaching platforms collect a wide array of personal data, including session inputs, voice recordings, text transcripts, authentication tokens, and even biometric data. For example, in June 2025, CoachHub updated AIMY's privacy policy to clarify that it collects session inputs, outputs, voice recordings, and text transcripts, among other data points like authentication tokens from linked apps [2]. Fitness trackers contribute additional metrics, such as heart rate and weight, while technical data like IP addresses and browser settings also feed into these systems [2][8].

Where and how this data is stored varies. Some companies host data on U.S.-based servers, while others opt for European locations, often using SSL encryption for data in transit [7][8]. However, when third-party providers like OpenAI or Anthropic are involved, safeguarding this information becomes a shared responsibility. As Professor Shaojie Tang from the University at Buffalo notes, "Proactively alerting users to potential privacy exposure during interactions with LLMs has become an urgent and practical need" [10]. These varied storage practices can lead to unintended data use and heightened risks of breaches.

Unapproved Data Use and Security Breaches

The sensitive nature of coaching sessions makes unauthorized data use a serious concern, eroding trust between users and platforms. By late 2025, most major AI providers had adopted opt-out training models, meaning your conversations are used to improve systems unless you actively disable this feature [4]. Unfortunately, many users remain unaware of this default setting, inadvertently granting broad permissions [6].

Even opting out doesn’t erase data already incorporated into training models [4]. For instance, Google Gemini retains chat data for up to 72 hours for abuse checks, even when activity tracking is off, while Anthropic may hold onto chats for as long as five years if you’ve opted into training [4]. With ChatGPT amassing 700 million users by August 2025, the sheer volume of personal data stored creates an enticing target for cyberattacks [11].

Lack of Transparency in AI Algorithms

Transparency - or the lack thereof - is another pressing issue. Many AI coaching tools fail to clearly explain how personal data is used or stored. Without this clarity, users have no way of knowing whether their information is being used for training purposes or retained indefinitely [11]. Researchers at Stanford University have highlighted that this lack of transparency can lead to "objective harms", where personal data is repurposed in unexpected ways [11].

"Trust breaks when you go fast, cannot explain decisions, and cannot name an owner." – Ojas Rege, General Manager of Privacy & Data Governance at OneTrust [9]

The rise of "Shadow AI" compounds this problem. Employees using unauthorized AI tools introduce fragmented data governance, leaving no clear accountability for how data is handled or secured [9].

Privacy Laws and Regulations in 2026

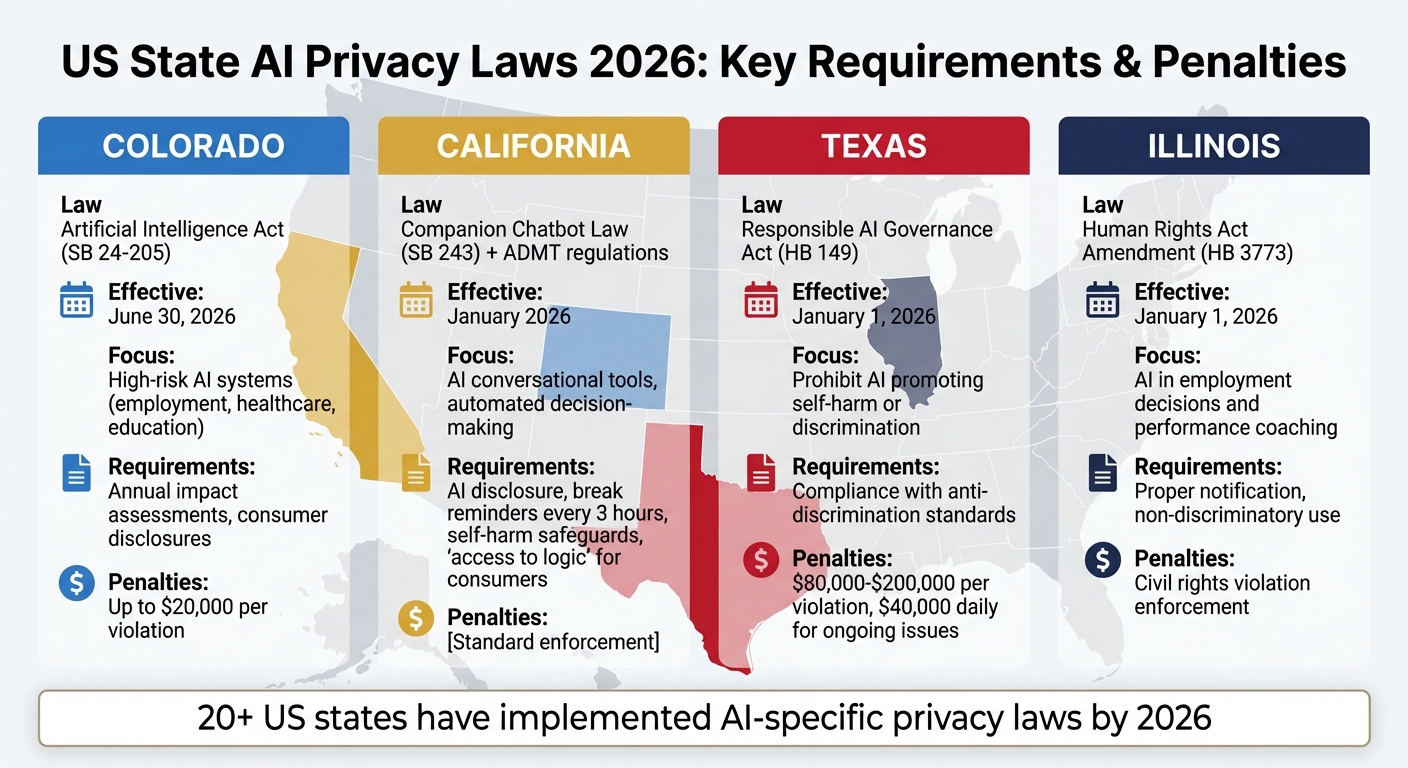

US State AI Privacy Laws 2026: Requirements and Penalties Comparison

By 2026, more than 20 U.S. states have implemented AI-specific privacy laws, many addressing automated decision-making technologies. These laws, combined with global standards, create a challenging compliance environment for AI coaching platforms.

US Privacy Laws Overview

State laws are now directly shaping how AI coaching tools operate. For instance, the Colorado Artificial Intelligence Act (SB 24-205), effective June 30, 2026, focuses on high-risk AI systems in areas like employment, healthcare, and education. This law mandates annual impact assessments and consumer disclosures, with fines reaching up to $20,000 per violation [13][15].

California has been particularly active in AI regulation. Starting January 2026, the Companion Chatbot Law (SB 243) requires AI-driven conversational tools to disclose when users are interacting with AI rather than humans. It also enforces measures like break reminders every three hours and safeguards against self-harm content, especially for minors [15]. Additionally, California's Automated Decision-Making Technology (ADMT) regulations demand that businesses provide consumers with "access to logic", enabling them to understand how automated systems arrive at specific conclusions [14].

Texas has introduced the Responsible AI Governance Act (HB 149), effective January 1, 2026, which prohibits AI systems designed to promote self-harm or unlawful discrimination. Violations can result in penalties ranging from $80,000 to $200,000, with daily fines of up to $40,000 for ongoing issues [15]. Similarly, Illinois has updated its Human Rights Act (HB 3773) to make it a civil rights violation to use AI for employment decisions - such as performance coaching - without proper notification or in a discriminatory way. This amendment also took effect on January 1, 2026 [15].

A 2026 industry report highlights a shift in compliance strategies, moving from "check-the-box" approaches to demonstrating actual performance. Regulators are increasingly auditing backend systems to ensure privacy tools work as intended. For example, in 2025, the California Privacy Protection Agency penalized American Honda Motor Co. for offering a one-click "Accept All" cookies option while requiring users to individually toggle off categories to opt out [16].

As state-level mandates grow stricter, global standards are also evolving to align with these domestic efforts.

Global Privacy Regulation Trends

While U.S. state laws set domestic guidelines, international standards are shaping AI compliance on a global scale, with frameworks that include detailed protocols for high-risk AI systems. For example, the EU AI Act, with full requirements for high-risk systems effective by August 2026, uses a tiered risk system that has influenced many U.S. states. AI coaching tools in sensitive areas like healthcare or employment often fall under the "high-risk" category, requiring strict assessments and human oversight [17][18].

The global focus is on human-centric approaches and algorithmic accountability. As Beatriz Peon from OneTrust aptly stated:

"By 2026, AI regulation will be judged by how it is enforced and applied, not by how it is drafted" [18].

This underscores the importance of AI coaching platforms not just having strong privacy policies but also proving that their systems uphold fairness, accountability, and fundamental rights in practice.

Enforcement is also intensifying. European authorities have issued over 2,500 fines under GDPR, totaling more than €6.7 billion [19]. Meanwhile, California alone reported a 74% increase in data breaches that each affected more than 500 residents during the first three weeks of January 2026, compared to the same period in 2025 [14]. Together, these figures signal real financial risk for non-compliant platforms, while users stand to benefit from stronger privacy protections.

How Brandbase Protects Your Privacy

As privacy concerns grow in the world of AI coaching, Brandbase takes a proactive approach to address these risks. Through advanced technical measures and clear policies, Brandbase ensures your personal data is protected, giving you full control over how it’s used.

Privacy Features in Brandbase Plans

Brandbase meets SOC 2 compliance standards and uses tools like data encryption to safeguard against unauthorized access. If you’re using Google services, Brandbase follows the Google API Services User Data Policy, adhering to its "Limited Use" requirements to protect any data shared through these integrations.

Each plan - Starter ($18/month + $499 fee), Essential ($99/month + $499 fee), and Pro ($499/month + $499 fee) - comes with secure hosting and authentication features that limit access to sensitive data. Payments are processed via Stripe, a platform compliant with PCI-DSS standards, ensuring that your financial information is handled securely. Additionally, Brandbase works with third-party vendors under strict agreements to ensure your data is only used for its intended purpose.

You also have full control over your personal information. If needed, you can request access to, corrections of, or even the complete deletion of your data by contacting founders@usebrainbase.xyz. Brandbase retains personal data only while your account is active or when required by law. Importantly, sensitive information like social security numbers or health data isn’t processed as part of its regular operations.

These measures reflect Brandbase's dedication to privacy and regulatory standards.

Transparency and Compliance Commitment

Beyond technical protections, Brandbase prioritizes clarity in its privacy practices. The company provides layered documentation, starting with a "Summary of Key Points" in its privacy notice, making its data practices easy to understand. Each major section of the privacy policy includes "In Short" summaries, breaking down complex legal details into digestible explanations.

Brandbase also distinguishes between the types of data it collects. Information you provide - like your name, email, and authentication details - is clearly separated from data collected automatically, such as IP addresses and browser details. This automatically gathered information is primarily used to maintain security and ensure smooth operations.

Steps to Protect Your Privacy

Brandbase manages the technical aspects of data protection, but your own actions play a big part in safeguarding your information. Decisions about what to share, which settings to use, and how carefully you review privacy policies directly affect your security when using AI coaching tools.

Share Only Necessary Data

Before sharing any information, think about whether it’s absolutely needed for your goal. Avoid entering sensitive details like passwords, API keys, full credit card numbers, unpublished medical or legal records, or trade secrets into cloud-based AI tools [4]. As the Fello AI Privacy Guide advises:

"Treat the chat window like a semi-public space. If you wouldn't want it projected in a meeting, don't type it here." [4]

Be intentional with the data you share. Ask yourself if details like a client’s full name or exact birth date are required, or if anonymized information will suffice. This approach reduces the risk of breaches. For generic AI tools like ChatGPT, Claude, or Gemini, opt out of model training by adjusting their privacy settings. For example, ChatGPT users can go to Settings > Data Controls and disable "Improve the model for everyone", while Claude users can toggle off "Help improve Claude" under Settings > Privacy [4].
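The advice above (keep secrets out of the chat window, and prefer anonymized details) can be partly automated before text ever reaches a cloud AI tool. The following is a minimal sketch, not a complete scanner: the regex patterns, the `sanitize` and `pseudonym` helpers, and the `Client-…` label format are all hypothetical choices made for illustration, and the date rule assumes ISO-style dates (YYYY-MM-DD).

```python
import hashlib
import hmac
import re

# Illustrative patterns only (assumptions); real scanners use far larger rule sets.
API_KEY = re.compile(r"\b(?:sk|pk|api)[-_][A-Za-z0-9_-]{16,}\b")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")   # 13 to 19 digits, optional separators
ISO_DATE = re.compile(r"\b(\d{4})-\d{2}-\d{2}\b")


def luhn_valid(candidate: str) -> bool:
    """Return True if the digits pass the Luhn checksum used by card numbers."""
    digits = [int(c) for c in candidate if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit, counting from the right
            d = d * 2 - 9 if d * 2 > 9 else d * 2
        total += d
    return total % 10 == 0


def pseudonym(name: str, secret: bytes) -> str:
    """Derive a stable, keyed label so the same client always maps to the same alias."""
    digest = hmac.new(secret, name.lower().encode("utf-8"), hashlib.sha256).hexdigest()
    return f"Client-{digest[:8]}"


def sanitize(text: str, client_names: list[str], secret: bytes) -> str:
    """Redact likely secrets, replace known client names, and coarsen dates to the year."""
    text = API_KEY.sub("[REDACTED_KEY]", text)
    text = CARD.sub(
        lambda m: "[REDACTED_CARD]" if luhn_valid(m.group()) else m.group(), text
    )
    for name in client_names:
        text = re.sub(re.escape(name), pseudonym(name, secret), text, flags=re.IGNORECASE)
    return ISO_DATE.sub(r"\1", text)
```

A call such as `sanitize(notes, ["Jane Doe"], b"local-secret")` would be run locally before pasting notes into a chat tool. Keyed hashing is used so that aliases stay consistent across sessions without being guessable from the name alone; the secret should stay on your own machine. Pattern matching will miss things, so it supplements rather than replaces a manual read-through.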

Review Privacy Policies and Features

Instead of blindly clicking "Accept", take time to review the key terms. Look for specific legal references like "GDPR Article 9" or "HIPAA BAA" to ensure compliance with industry standards. Confirm that encryption protocols meet best practices, such as TLS 1.2+ for data in transit and AES-256 for data at rest [20]. A 2023 study revealed that 78% of top-rated wellness apps claiming "HIPAA-compliant" status failed basic checks, including secure data transmission [20].
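For readers comfortable with a little scripting, the in-transit half of that encryption claim can be spot-checked. The sketch below uses Python's standard `ssl` module to build a client context that refuses anything older than TLS 1.2 and then reports the version a server actually negotiates. The function names and the example hostname are hypothetical, chosen for illustration only.

```python
import socket
import ssl
from typing import Optional


def strict_tls_context() -> ssl.SSLContext:
    """Client context that verifies certificates and rejects protocols below TLS 1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Connect to host and return the negotiated protocol, e.g. 'TLSv1.3'.

    Raises ssl.SSLError if the server cannot meet the TLS 1.2 minimum.
    """
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with strict_tls_context().wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()


if __name__ == "__main__":
    # Hypothetical hostname; substitute the platform you are evaluating.
    print(negotiated_tls_version("coaching-platform.example"))
```

This only covers data in transit. Encryption at rest (such as AES-256) cannot be observed from outside, so for that claim you still depend on the vendor's documentation or an audit report.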

Check for clear "zero-training" commitments, which guarantee your data won’t be used to improve external AI models. Determine if the platform is a "data controller" (deciding how data is used) or a "data processor" (acting on your behalf), as this affects how data rights requests are handled [2][12]. Dr. Lena Torres, Former Chief Privacy Officer at the Office for Civil Rights (HHS), stresses:

"Compliance isn't about ticking boxes - it's about demonstrating ongoing accountability. A single BAA means nothing if the vendor can't prove encryption keys are rotated quarterly." [20]

Beyond reading policies, make sure to actively configure the platform’s security settings.

Turn On Security Features

Multifactor authentication (MFA) is a must. Enabling MFA on any AI coaching platform you use helps prevent unauthorized access to your session data, even if your password is compromised [3]. Additionally, fine-tune privacy settings by disabling features like unencrypted email summaries or cloud sync if you’re working with sensitive topics. When possible, choose local-only storage [1].

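You can also submit a "right to erasure" request asking the vendor to delete your data, including from backups and training sets [20]. Under GDPR, vendors generally must respond within one month, a period that can be extended for complex requests. HIPAA, by contrast, does not grant a general right to deletion, so check the vendor's own retention and deletion terms. If you work directly with clients, include AI disclosure terms in your contracts. These should explain how AI tools are used and give clients the option to opt out [1].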

Conclusion: Balancing AI Innovation with Privacy

AI coaching tools can be game-changers for service professionals, but their success hinges on one crucial factor: privacy. According to one source, 83% of colleagues report noticeable improvements in managers who use AI coaching tools built on secure systems [3]. This highlights a simple truth - privacy isn’t just an add-on; it’s what makes innovation possible.

When selecting an AI coaching platform, it's essential to prioritize those that have privacy baked into their design. Features like user-level data isolation, zero-training commitments, and certifications such as SOC 2 Type 2 or ISO/IEC 27001 are non-negotiable. These privacy-first principles lay the groundwork for trust in AI coaching. For example, platforms like Brandbase ensure that your coaching conversations and client data are protected from the start.

Your own choices also play a role in maintaining privacy. Sharing only the necessary data and enabling multifactor authentication are simple but effective steps. This is especially important given that 78% of employees reportedly turn to personal AI tools to avoid oversight [5]. Balancing a platform's built-in protections with careful data management on your part is key.

"The right AI coach should never reveal private coaching conversations to employers - because learning, reflection, and development only happen when people feel safe to be honest." – Matt Lievertz, VP of Engineering at Cloverleaf [5]

Creating that sense of safety starts with choosing tools that respect your privacy and taking proactive steps to safeguard your information.

FAQs

How can I tell if an AI coaching tool uses my sessions to train its models?

Take a moment to review the tool’s privacy policy or settings to understand how your session data is handled. Look for clear explanations about whether your data is being used for training purposes.

Many platforms offer options like toggles or controls that let you disable this feature. By doing so, you can ensure your data isn’t used to refine or improve their models. Always double-check these settings to maintain control over your personal information.

What security settings should I enable before sharing sensitive coaching data?

Before sharing any sensitive coaching data, enable multifactor authentication on your account and turn off any setting that allows the AI tool to use your data for training. Then confirm what the platform itself provides: encryption both in transit and at rest, and user-level data isolation that keeps each person's data separate. Finally, review the remaining privacy settings, focusing on how data is collected and used for personalization, and disable features like unencrypted email summaries or cloud sync when working with sensitive topics. These precautions help protect sensitive information and reduce the risk of unauthorized access.

What privacy laws in 2026 most affect AI coaching tools in the U.S.?

Several state laws shape how AI coaching tools operate in 2026. In California, the Companion Chatbot Law (SB 243) requires conversational tools to disclose when users are interacting with AI, the Automated Decision-Making Technology (ADMT) regulations give consumers "access to logic", and updates to the California Consumer Privacy Act (CCPA) classify both neural data and minors' data as sensitive. Texas's Responsible AI Governance Act (HB 149) and Illinois's amended Human Rights Act (HB 3773) both took effect on January 1, 2026, and the Colorado Artificial Intelligence Act (SB 24-205) follows on June 30, 2026, with requirements for high-risk AI systems in areas like employment, healthcare, and education.